Smarten up your APIs

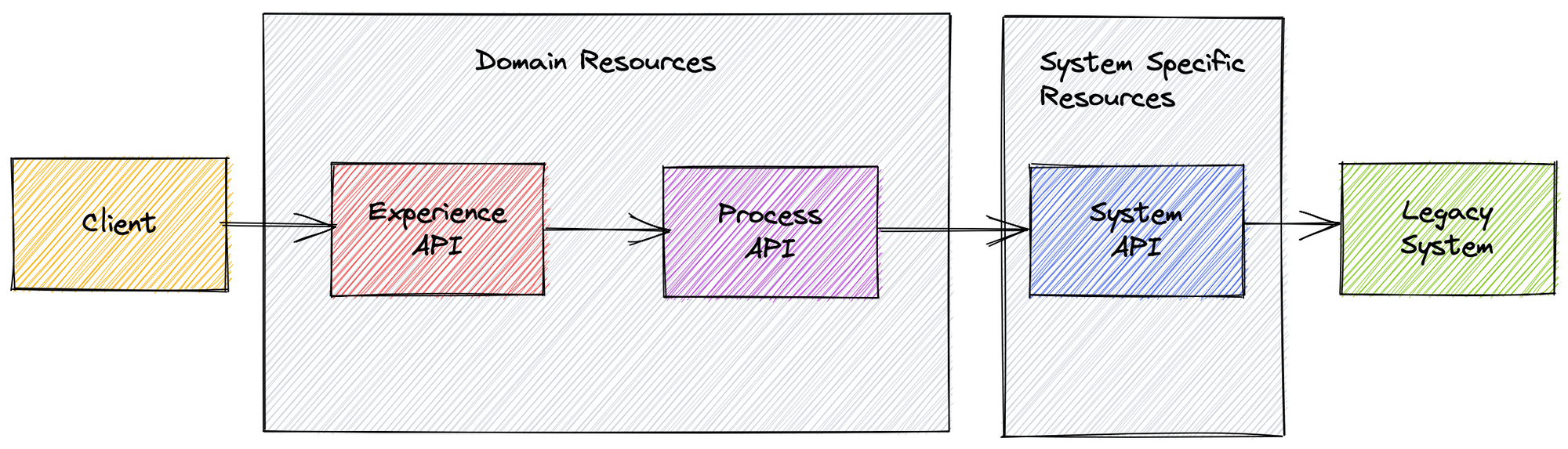

APIs that expose data and business processes belonging to legacy systems were the first wave of API enablement. Most projects end up looking like the following:

System API

While the legacy system contains critical application logic and data, its usage can be modernised by exposing a System API. The resources exposed by this API will usually closely mirror those of the underlying system, with the opportunity to reduce the conceptual and vendor-specific noise present in existing data and processes.
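
As a rough illustration of that noise reduction, the sketch below maps one legacy row to a cleaner System API resource. The field names (`CUST_NO`, `CUST_NM`, `STAT_CD`, `ZZ_FILLER_01`), the status codes and the `Customer` shape are all invented for the example and do not come from any particular system.

```python
from dataclasses import dataclass

# Hypothetical legacy row; every field name here is an assumption.
LEGACY_ROW = {
    "CUST_NO": "000123",
    "CUST_NM": "ACME PTY LTD   ",  # fixed-width padding from the source system
    "STAT_CD": "A",
    "ZZ_FILLER_01": "",            # vendor-specific noise the API should hide
}

# Assumed meaning of the legacy status codes.
STATUS_CODES = {"A": "active", "I": "inactive"}


@dataclass(frozen=True)
class Customer:
    """System API representation: mirrors the legacy record, minus the noise."""
    id: str
    name: str
    status: str


def to_customer(row: dict) -> Customer:
    """Map one legacy row to the System API resource."""
    return Customer(
        id=row["CUST_NO"],
        name=row["CUST_NM"].strip(),
        status=STATUS_CODES.get(row["STAT_CD"], "unknown"),
    )


customer = to_customer(LEGACY_ROW)
```

The resource still follows the shape of the underlying record. Only the naming, padding and filler fields have changed.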

Domain APIs

The Process and Experience APIs introduce a more refined domain language. The Process API aims to break down silos by providing a broad set of resources that can be used across different applications and domains. The Experience API can aggregate and distill across a number of resources to provide an API specific to the calling application's requirements.
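
A minimal sketch of the Experience API idea follows, assuming two Process API resources (customers and orders) that are stood in for by in-memory dictionaries. The function names, data and response fields are hypothetical; a real implementation would call the Process APIs over the network.

```python
# In-memory stand-ins for two Process API resources (hypothetical data).
CUSTOMERS = {"123": {"id": "123", "name": "Acme Pty Ltd", "status": "active"}}
ORDERS = {
    "123": [
        {"id": "o-1", "total": 40.0, "state": "open"},
        {"id": "o-2", "total": 15.5, "state": "shipped"},
    ]
}


def get_customer(customer_id: str) -> dict:
    """Stand-in for a call to a customer Process API."""
    return CUSTOMERS[customer_id]


def get_orders(customer_id: str) -> list:
    """Stand-in for a call to an orders Process API."""
    return ORDERS.get(customer_id, [])


def account_summary(customer_id: str) -> dict:
    """Experience API response: aggregate two resources and keep only
    what this particular calling application needs."""
    customer = get_customer(customer_id)
    open_orders = [o for o in get_orders(customer_id) if o["state"] == "open"]
    return {
        "customerName": customer["name"],
        "openOrderCount": len(open_orders),
        "openOrderTotal": sum(o["total"] for o in open_orders),
    }
```

Calling `account_summary("123")` returns a single compact payload, so the client does not have to fetch and combine the two resources itself.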

Leveling Up Maturity

Following these patterns when complexity is low can leave you with an end-to-end set of data mappers, which can be overkill when servicing a limited number of domains. At the other end of the scale, rather than becoming an "anaemic domain model", these sets of APIs can become very complex, embedding important concepts and processes within the data transformation layer.

Protecting your domain knowledge as your APIs evolve

As a rule of thumb, when a transformation frequently combines two or more data points with some logic, this usually indicates that the business concept should be externalised to a rules engine. A rules engine allows the system owners to upload their domain knowledge quickly and efficiently, in parallel with the API layers' lifecycle. Building out the respective layers then becomes a much smaller, more iterative exercise.
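
The rule of thumb can be sketched with a toy first-match evaluator. This is not a real rules engine product. The `Rule` type, the customer tier rules and the spend thresholds are assumptions made up for the example. The point is only that the logic combining `status` and `annual_spend` lives in a rule set the domain owners can change, while the mapper stays trivial.

```python
from dataclasses import dataclass
from typing import Any, Callable, Mapping, Sequence


@dataclass(frozen=True)
class Rule:
    """One business rule: a named condition over the facts and its outcome."""
    name: str
    applies: Callable[[Mapping[str, Any]], bool]
    outcome: str


# Hypothetical rules, maintained by domain owners apart from the mapping code.
TIER_RULES = [
    Rule("gold-tier",
         lambda f: f["status"] == "active" and f["annual_spend"] >= 10_000,
         "gold"),
    Rule("silver-tier",
         lambda f: f["status"] == "active" and f["annual_spend"] >= 1_000,
         "silver"),
]


def evaluate(rules: Sequence[Rule], facts: Mapping[str, Any], default: str) -> str:
    """Return the outcome of the first rule that applies, else the default."""
    for rule in rules:
        if rule.applies(facts):
            return rule.outcome
    return default


def to_customer_resource(record: Mapping[str, Any]) -> dict:
    """The data mapper only copies fields and delegates the business concept."""
    return {
        "id": record["id"],
        "tier": evaluate(TIER_RULES, record, default="standard"),
    }
```

With this split, changing a threshold or adding a tier touches only `TIER_RULES`, not the API layer that calls `to_customer_resource`.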

While carefully designed resource representations will continue to add value in defining a domain API, using a rules engine in addition to a data transformation approach is a key component in leveling up your API transformation.